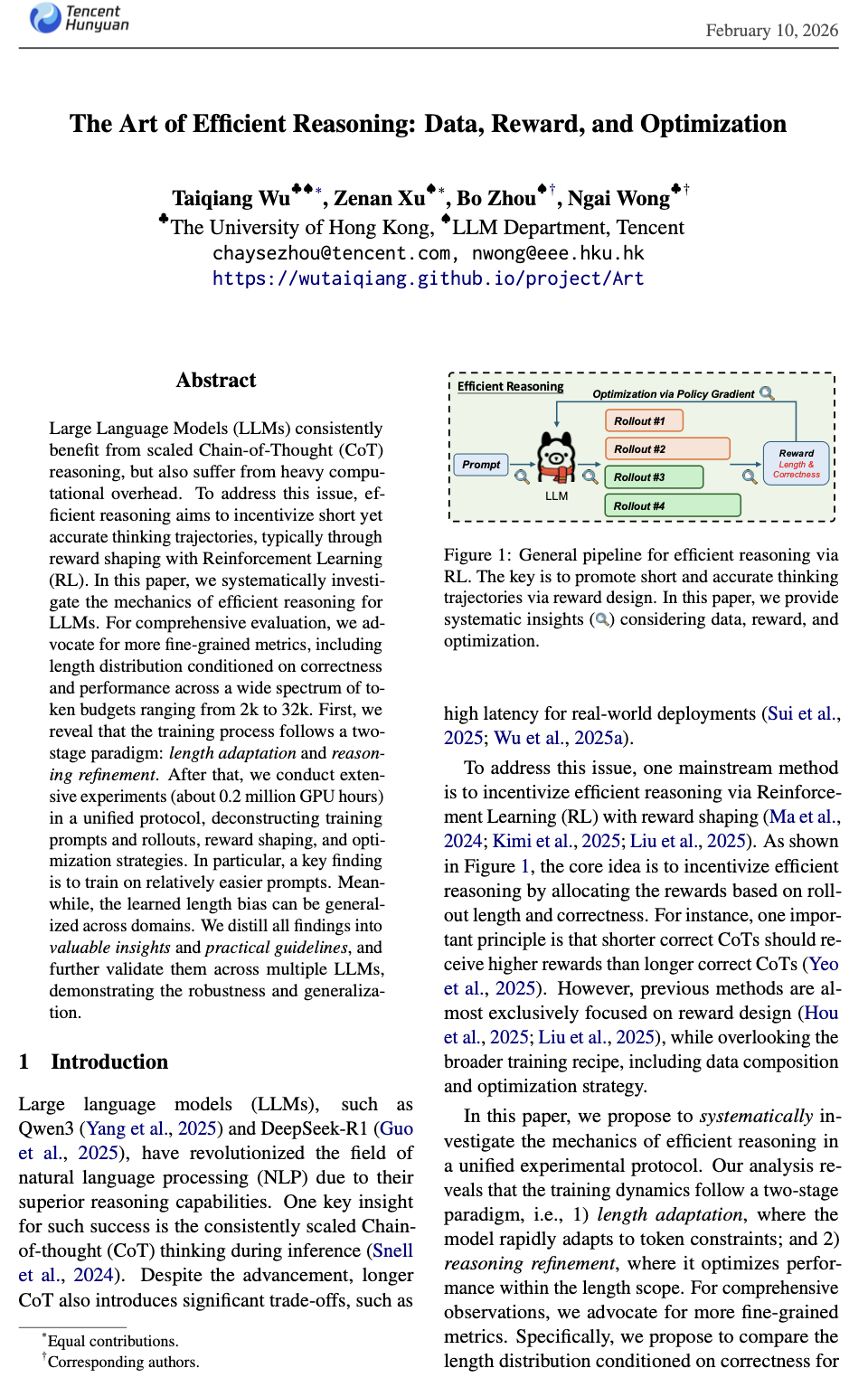

In this paper, we systematically investigate the mechanics of efficient reasoning for LLMs, which aims to incentivize short yet accurate thinking trajectories. One typical method is reward shaping, which can be formulated as:

$$reward_i:=f(c_i) \rightarrow reward_i:=g(c_i,l_i).$$

The key is to set \(reward_i\) based on both correctness \(c_i\) and length \(l_i\), rather than vanilla RL that relies on correctness \(c_i\) only.

Q1: How to evaluate a compressed LLM? Performance under low token budgets?

Answer: It is risky to evaluate an LLM only via performance under low budgets. Particularly when the truncation rate is high, the trained LLM tends to overfit to short output sequences and suffers from the reasoning collaspe issue. Consequently, the scaling law may fail, i.e., increasing the token budget yields only marginal gains. In contrast, the original LLM demonstrates substantial benefits from a scaled token budget. It is definitly not what we excepted. Ideally, we want the LLM to retain high-ceiling performance while shifting its output distribution to shorter token regions. Thus, we propose to evalute the LLM under a wide token-budget spectrum.

In this paper, we advocate more fine-grained metrics, including length distributions conditioned on correctness (length for correct and incorrect rollouts) and performance across a wide token-budget spectrum from 2k to 32k.

Q2: Can an LLM trained on domain A transfer well to domain B?

Answer: Fortunately, the learned length inductive bias can generalize across difficulties and domains. We attribute this to minimal perturbation of model distributions under RL optimization. We further report results on private benchamarks, and the results are consistent with our expectations. Please check the Appendix in the paper for more detailed results.

Takeaway #1: Two-stage Paradigm

Stage 1: Length Adaptation. The optimization of constraint satisfaction dominates this initial phase. Driven by the length penalty, the model rapidly adjusts its output distribution to avoid zero-reward truncation.

Stage 2: Reasoning Refinement. Once the rollout length stabilizes within the target budget, the training enters a stationary phase regarding length, shifting focus to performance optimization. In this stage, the length curves plateau, demonstrating that the model has successfully adapted to the hard constraints on output length.

The LLM tends to satisfy length constraints first, which is much easier than correctness. Thus the optimization trajectories can be approximated as \(max_{c_i}\left\{max_{l_i}\left[g(c_i,l_i)\right]\right\}\).

Takeaway #2: Insights on Training Recipes

We apply the truncation strategy to the DeepSeek-Distilled-Qwen-1.5B model. For rollouts sampled at \(L_R\), only those that are correct and shorter than \(L_T\) are considered positive samples.

Insight on Data: The key is to ensure sufficient and effective rewards. Training on easier prompts allows LLMs to focus on length reduction without compromising performance. Larger rollout \(N\) is better if computational resources allow.

Insight on Reward: Not penalizing overlong correct rollouts leads to higher performance, but also slightly longer outputs. Sampling at target length (\(L_R=L_T\)) achieves a better trade-off by avoiding the length trap. Typically, the positive rollouts are shorter than negative ones, which implicitly encourages the model to be short yet accurate.

Insight on Optimization: Appropriate staleness can accelerate convergence without harming accuracy (before 800 steps), but also introduces latent instability, manifested as rising entropy and uncontrolled length drift. We suggest the on policy strategy, especially for larger LLMs, which are more fragile.

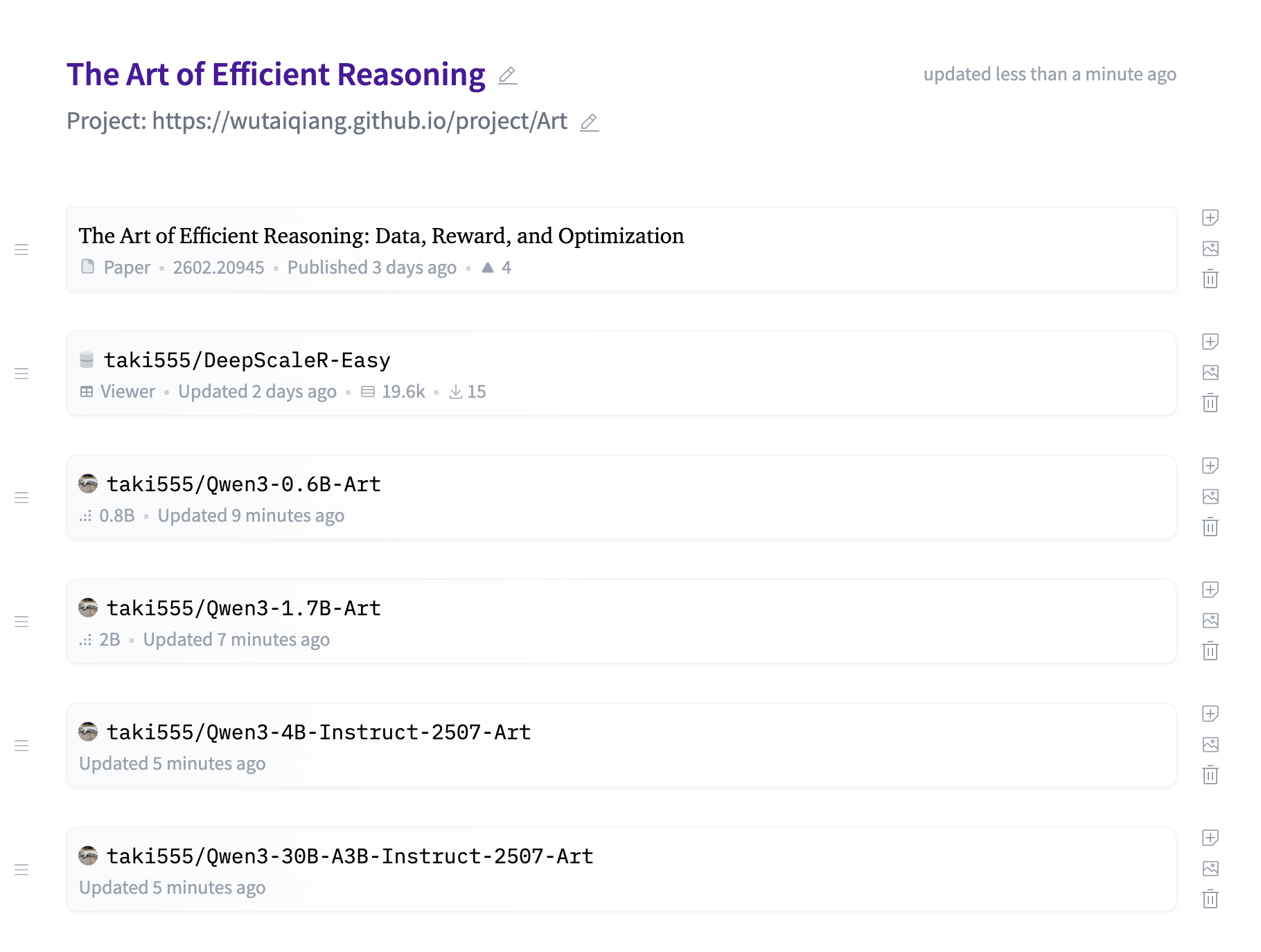

Takeaway #3: Compressed Qwen3 Models

We further apply our insights to Qwen3 models. The weights are open-sourced and can be found at here.

| Method | Mean@8↑ | Pass@8↑ | Length↓ |

|---|---|---|---|

| Qwen3-0.6B | |||

| Vanilla | 13.33 | 26.67 | 14.9k |

| Ours (step 640) | 24.58 (+11.25) | 36.67 (+10.00) | 8.9k (↓40.3%) |

| Qwen3-1.7B | |||

| Vanilla | 35.00 | 60.00 | 17.7k |

| Ours (step 560) | 38.75 (+3.75) | 60.00 | 11.2k (↓36.7%) |

| Qwen3-4B-Instruct-2507 | |||

| Vanilla | 45.42 | 66.67 | 9.1k |

| Ours (step 1440) | 46.67 (+1.25) | 70.00 (+3.33) | 4.8k (↓47.3%) |

| Qwen3-4B-Thinking-2507 | |||

| Vanilla | 75.83 | 90.00 | 20.9k |

| Ours (step 200) | 76.25 (+0.42) | 86.67 | 16.0k (↓23.4%) |

| Qwen3-8B | |||

| Vanilla | 65.83 | 86.67 | 17.9k |

| Ours (step 100) | 67.08 (+1.25) | 83.33 | 12.8k (↓28.5%) |

| Qwen3-30B-A3B-Instruct-2507 | |||

| Vanilla | 60.83 | 83.33 | 6.9k |

| Ours (step 600) | 60.83 | 76.67 | 5.1k (↓26.1%) |

| Qwen3-30B-A3B-Thinking-2507 | |||

| Vanilla | 84.17 | 96.67 | 17.3k |

| Ours (step 120) | 86.25 (+2.08) | 96.67 | 14.8k (↓14.5%) |

Table: Performance on AIME'25 for Qwen3 models.

- Rollout \(N\): As large as possible. We recommend 24.

- \(L_R\) and \(L_T\): A typical value is 8k. If the output length drops too fast, please increase them. Particularly, for Instruct model, try to set \(L_T\) slightly lower than \(L_R\) to push model be shorter.

bibliography

Taiqiang Wu, Zenan Xu, Bo Zhou, Ngai Wong. "The Art of Efficient Reasoning: Data, Reward, and Optimization." Arxiv, 2602.20945

bibtex

@article{wu2026art,

title={The Art of Efficient Reasoning: Data, Reward, and Optimization},

author={Taiqiang Wu and Zenan Xu and Bo Zhou and Ngai Wong},

year={2026},

url={https://arxiv.org/pdf/2602.20945}

}